Difference between revisions of "Data Linkage Table"

| (22 intermediate revisions by 2 users not shown) | |||

| Line 1: | Line 1: | ||

The | The '''data linkage table''' is the first components of using a [[Data Map|data map]] to organize [[Research Data Work|data work]] within a [[Impact Evaluation Team|research team]] . A '''data linkage table''' lists all the datasets in a particular project, and explains how they are linked to each other. It allows the '''research team''' to accurately link all datasets associated with the project in a [[Reproducible Research|reproducible manner]]. This is particularly useful when projects last multiple years. Over time, members of the research team may change, and new data could be [[Primary Data Collection|collected]] or [[Data Acquisition|acquired]]. In such cases, documenting the various datasets using a '''data linkage table''' can help resolve errors in linking datasets. | ||

== Read First == | == Read First == | ||

* A '''data map''' is a template designed by [https://www.worldbank.org/en/research/dime/data-and-analytics DIME Analytics] to organize 3 main aspects of [[Research Data Work|data work]]: [[Data Analysis|data analysis]], [[Data Cleaning|data cleaning]], and [[Data Management|data management]]. | * A '''data map''' is a template designed by [https://www.worldbank.org/en/research/dime/data-and-analytics DIME Analytics] to organize and document the 3 main aspects of [[Research Data Work|data work]]: [[Data Analysis|data analysis]], [[Data Cleaning|data cleaning]], and [[Data Management|data management]]. | ||

* | * A [[Data Linkage Table|data linkage table]] is one of three components in a [[Data Map|data map]]. | ||

* The '''data linkage table''' | * The '''data linkage table''' is the best way to communicate to current and future team members what data will be used and how it may be linked. | ||

* The '''data linkage table''' helps resolve errors in linking large and complex datasets. | |||

== | == Overview == | ||

The purpose of a '''data linkage table''' is to allow the [[Impact Evaluation Team|research team]] to accurately and [[Reproducible Research|reproducibly]] link all datasets associated with the project. Errors in linking datasets are fairly common in development research, particularly when there are several rounds of [[Primary Data Collection|data collection]] involved, or while using multiple [[Secondary Data Sources|secondary data]] sources. | |||

The data linkage table | The '''data linkage table''' allows the team to plan out the "data ecosystem" of the project before data has even started to be acquired. This helps the team make sure that the data sets that will be acquired have the ID variables needed combine multiple dataset, minimizing the risk that two data sets at all cannot be combined or cannot be combined accurately. It also makes the team agree on names for each dataset so two similar datasets are not confused when discussed by the team. Furthermore, it also documents important meta information such as where the raw data is stored in the project folder and where it is backed up. | ||

It is also important to note that the '''data linkage table''' should only list the original datasets, and not any datasets that are derived from them. For example, if the research team collects [[Primary Data Collection|primary data]], the data linkage table should only include the raw data, and not the [[Data Cleaning|cleaned version]] of the data. Similarly, in the case of [[Administrative Data|administrative]] or [[Data Acquisition|data acquired]] through '''web-scraping''', the table should not include combinations or reshaped versions of those datasets. As a '''best practice''', the research team should simultaneously [[Data Documentation|document the code]] which creates derivatives of the datasets that are listed in the data linkage table. This allows the research team to trace the derived datasets back to the original dataset. | |||

The '''data linkage table''' should not be a static document after it has once been created. It should reflect the research teams best understanding at any given time of the datasets that will be used in the project. If the team learns something new about any of the data sources, or realizes that halfway through the project that a another data source is needed, then the data linkage table should be updated. The overview the table gives the team also makes it easy to spot if this new development has any consequence for any other dataset in the table and then that can promptly be addressed. | |||

== Template == | == Template == | ||

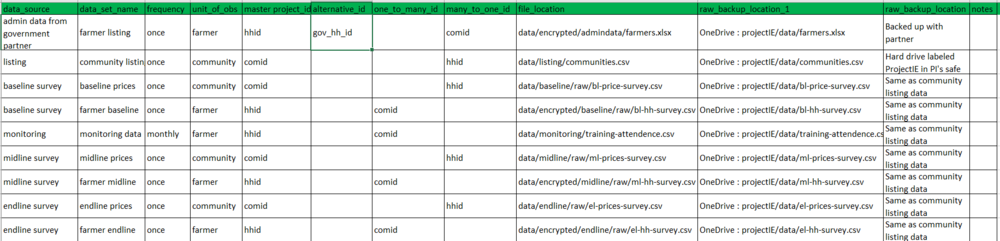

[https://www.worldbank.org/en/research/dime/data-and-analytics DIME Analytics] has created this [https://github.com/Avnish95/econ-101/raw/master/Template.xlsx downloadable template] to create a '''data linkage table'''. Given below is an example of a '''data linkage table''' that has been filled out. | [https://www.worldbank.org/en/research/dime/data-and-analytics DIME Analytics] has created this [https://github.com/Avnish95/econ-101/raw/master/Template.xlsx downloadable template] to create a '''data linkage table'''. Given below is an example of a '''data linkage table''' that has been filled out. | ||

[[File: | [[File:linkagetable.png|1000px|thumb|left|'''Fig. : A sample template for a data linkage table''']] | ||

| Line 32: | Line 37: | ||

== Related Pages == | == Related Pages == | ||

[[Special:WhatLinksHere/Data_Linkage_Table|Click here to see pages that link to this topic]]. | |||

[[Category: Reproducible Research]] | |||

Revision as of 14:24, 13 April 2021

The data linkage table is the first component of a data map, which a research team uses to organize its data work. A data linkage table lists all the datasets in a particular project and explains how they are linked to each other. It allows the research team to accurately link all datasets associated with the project in a reproducible manner. This is particularly useful when projects last multiple years. Over time, members of the research team may change, and new data could be collected or acquired. In such cases, documenting the various datasets using a data linkage table can help resolve errors in linking datasets.

Read First

- A data map is a template designed by DIME Analytics to organize and document the 3 main aspects of data work: data analysis, data cleaning, and data management.

- A data linkage table is one of three components in a data map.

- The data linkage table is the best way to communicate to current and future team members what data will be used and how it may be linked.

- The data linkage table helps resolve errors in linking large and complex datasets.

Overview

The purpose of a data linkage table is to allow the research team to accurately and reproducibly link all datasets associated with the project. Errors in linking datasets are fairly common in development research, particularly when there are several rounds of data collection involved, or while using multiple secondary data sources.

The data linkage table allows the team to plan out the "data ecosystem" of the project before any data has been acquired. This helps the team make sure that the datasets that will be acquired have the ID variables needed to combine multiple datasets, minimizing the risk that two datasets cannot be combined at all or cannot be combined accurately. It also makes the team agree on a name for each dataset, so that two similar datasets are not confused when the team discusses them. Furthermore, it documents important meta information, such as where the raw data is stored in the project folder and where it is backed up.
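The planning check described above can be sketched in a few lines of Python. This is only a minimal illustration and not part of the DIME Analytics template: the dataset names, the variable names, and the helper `find_linkage_problems` are all hypothetical.

```python
from collections import Counter


def find_linkage_problems(datasets, links):
    """Return a list of human-readable problems in a planned data ecosystem.

    datasets: list of (dataset_name, set_of_variable_names) pairs.
    links: list of (left_name, right_name, id_variable) triples describing
           which datasets are expected to be combined, and on which ID.
    """
    problems = []

    # Every dataset needs a unique name so two datasets are never confused.
    counts = Counter(name for name, _ in datasets)
    for name, n in counts.items():
        if n > 1:
            problems.append(f"dataset name '{name}' is used {n} times")

    variables = {name: cols for name, cols in datasets}

    # Both sides of every planned link must contain the ID variable.
    for left, right, id_var in links:
        for name in (left, right):
            if name not in variables:
                problems.append(f"link refers to unknown dataset '{name}'")
            elif id_var not in variables[name]:
                problems.append(f"'{name}' lacks ID variable '{id_var}'")
    return problems


# Hypothetical project: a school census and a student survey.
planned = [
    ("school_census", {"school_id", "district"}),
    ("student_baseline", {"student_id", "test_score"}),
]
planned_links = [("student_baseline", "school_census", "school_id")]

for problem in find_linkage_problems(planned, planned_links):
    print(problem)
```

In this toy plan the student survey is missing the school ID, which is the kind of gap that is much cheaper to catch before the survey instrument is finalized than after the data has been collected.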

The data linkage table should list only the original datasets, and not any datasets that are derived from them. For example, if the research team collects primary data, the data linkage table should include only the raw data, and not the cleaned version of the data. Similarly, in the case of administrative data or data acquired through web scraping, the table should not include combinations or reshaped versions of those datasets. As a best practice, the research team should simultaneously document the code that creates derivatives of the datasets listed in the data linkage table. This allows the research team to trace each derived dataset back to its original dataset.

The data linkage table should not be a static document once it has been created. It should reflect the research team's best understanding, at any given time, of the datasets that will be used in the project. If the team learns something new about any of the data sources, or realizes halfway through the project that another data source is needed, then the data linkage table should be updated. The overview that the table provides also makes it easy to spot whether the new development has consequences for any other dataset in the table, so that these can be addressed promptly.

Template

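DIME Analytics has created a downloadable template (https://github.com/Avnish95/econ-101/raw/master/Template.xlsx) for creating a data linkage table. Given below is an example of a data linkage table that has been filled out.

Fig.: A sample template for a data linkage table (linkagetable.png)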

Explanation

Given below is a brief explanation of the purpose and contents of each column in the data linkage table:

- data_source. Where does this data come from? It could either be a data acquisition activity like a survey, or the name of a partner organization that is providing your research team with the data.

- data_set_name. What is this dataset called within the research team? Make sure that all datasets have unique and informative names, and that everyone in the team uses these names, so that there is never any confusion.

- frequency. How often is this data collected? This could be “once”, which would be the case, for example, for a baseline survey. If you run the same survey at two distinct points in time, for example, a baseline and an endline, then count these as separate datasets, each with frequency “once”. For any data acquisition that is not a single discrete activity, this column indicates the frequency, which could be anything from hourly or daily to monthly.

- unit_of_obs. What is the unit of observation for the dataset? That is, what does each row represent? Each category in this column should have a corresponding master dataset.

- master_project_id. What is the name of the ID variable used in this project to identify each unit, i.e. each row, in this dataset? This ID should be uniquely and fully identifying.

- alternative_id. List any other IDs that are used to identify this unit of observation, for example IDs used by partner organizations. While a research team should use only one ID per unit of observation per project (i.e. the master_project_id), it is still common for multiple IDs for the same unit of observation to occur in the same project because, for example, partner organizations have their own IDs. As a rule, avoid using an ID that someone else also uses, since anyone else using that ID can re-identify the data. A project that uses someone else’s ID should consider its data as identified, even if all other identifiers are removed during de-identification.

- one_to_many_id. Which (if any) other project ID variables does this dataset's ID variable merge one-to-many to? For example, if this dataset has the unit of observation “school” and the project also has student datasets, then this column should include the student ID variable, as each school merges to many students. Each ID listed here should have a master dataset.

- many_to_one_id. Which (if any) other project ID variables does this dataset's ID variable merge many-to-one to? For example, if this dataset has the unit of observation “student” and the project also has school datasets, then this column should include the school ID variable, as many students merge to the same school. All ID variables listed here must also exist in the master dataset for this dataset's unit of observation. So in the student/school example, the student master dataset must include the school ID variable. Each ID listed here should have a master dataset.

- file_location. This is the file path in the project's shared file system where the dataset is stored. If this data is not stored in a shared file system and, for example, is pulled from the internet each time the code runs, then the URL should be listed here together with any other information on how to access the data.

- raw_backup_location_1 and raw_backup_location_2. Where are these datasets backed up? Be as detailed as possible. On the day you need this information, you will be thankful for every detail you recorded. List details such as storage type (hard drive, cloud, etc.), full file path, and file name. Remember that these files need to be encrypted if they include identifying information (which is almost always the case with raw data), so you should save decryption instructions here as well. (The decryption key itself should be saved in a more secure location.)

- notes. A column for notes about a specific dataset. If the same type of note appears for many datasets, consider adding a new column for that type of information.
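Two of the requirements in the column descriptions above lend themselves to automated checks: that master_project_id is uniquely and fully identifying, and that every ID listed in many_to_one_id has a match in the corresponding master dataset. The following Python sketch illustrates both under simplifying assumptions. The `LinkageRow` class covers only a subset of the columns, and the helper functions and toy data are hypothetical; none of this comes from the DIME Analytics template itself.

```python
from dataclasses import dataclass


@dataclass
class LinkageRow:
    """One row of a data linkage table (only a subset of the columns)."""
    data_set_name: str
    unit_of_obs: str
    master_project_id: str
    many_to_one_id: str = ""


def id_is_unique_and_complete(records, id_var):
    """True if every record has a non-empty id_var and no value repeats."""
    values = [rec.get(id_var) for rec in records]
    if any(v is None or v == "" for v in values):
        return False
    return len(set(values)) == len(values)


def unmatched_many_to_one(records, id_var, master_records):
    """Return values of id_var in records with no match in the master dataset."""
    master_ids = {rec[id_var] for rec in master_records}
    return sorted({rec[id_var] for rec in records} - master_ids)


# Hypothetical toy data: each dict is one row of a dataset.
school_master = [{"school_id": "S1"}, {"school_id": "S2"}]
student_master = [
    {"student_id": "A", "school_id": "S1"},
    {"student_id": "B", "school_id": "S1"},
    {"student_id": "C", "school_id": "S3"},  # S3 is not in the school master
]

row = LinkageRow("student_master", "student", "student_id",
                 many_to_one_id="school_id")

print(id_is_unique_and_complete(student_master, row.master_project_id))
print(unmatched_many_to_one(student_master, row.many_to_one_id, school_master))
```

Here the student ID passes the uniqueness check, while the second call reports the school ID that would fail to merge to the school master dataset. In a real project the records would be read from the locations listed in file_location rather than typed in by hand.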