Data Management

Revision as of 21:41, 6 February 2017 (Kbjarkefur)

Due to the long life span of a typical impact evaluation, during which multiple generations of team members contribute to the same data work, clear methods for organizing the data folder, structuring the data sets in that folder, and identifying the observations in those data sets are critical.

Read First

- Never work with a data set where the observations do not have standardized IDs. If you get a data set without IDs, the first thing you need to do is to create individual IDs.

- Always create master data sets for every unit of observation relevant to the analysis.

- Never merge on variables that are not ID variables unless one of the data sets being merged is the master data set.
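The merge rules in the list above can be sketched as follows. This is a minimal illustration in Python, with data sets held as lists of dictionaries. It is not the Stata workflow the project's do-files would use. The variable names (`hhid`, `name`, `village`, `income`, `consumption`) and both helper functions are hypothetical.

The sketch shows the one allowed exception: a data set that arrives without IDs is matched once against the master data set on identifying information. Every later merge uses only the ID.

```python
def attach_ids(new_rows, master_rows, match_vars, id_var):
    """Copy the ID from the master data set onto rows that arrived without IDs.

    Matching on non-ID variables is acceptable here only because one side is
    the master data set. A row that matches zero or several master records
    raises an error so it can be resolved by hand.
    """
    # Index the master data set by the identifying (non-ID) variables
    index = {}
    for row in master_rows:
        key = tuple(row[v] for v in match_vars)
        index.setdefault(key, []).append(row[id_var])

    with_ids = []
    for row in new_rows:
        key = tuple(row[v] for v in match_vars)
        matches = index.get(key, [])
        if len(matches) != 1:
            raise ValueError(f"{key} matches {len(matches)} master records")
        with_ids.append({id_var: matches[0], **row})
    return with_ids


def merge_on_id(left_rows, right_rows, id_var):
    """Merge two data sets one-to-one on the ID variable only.

    The ID must be unique in both data sets, and every left row must find a
    matching right row. Variables present in both keep the right-hand value.
    """
    for side, rows in (("left", left_rows), ("right", right_rows)):
        ids = [row[id_var] for row in rows]
        if len(ids) != len(set(ids)):
            raise ValueError(f"{id_var} is not unique in the {side} data set")
    right_index = {row[id_var]: row for row in right_rows}
    merged = []
    for row in left_rows:
        if row[id_var] not in right_index:
            raise ValueError(f"{id_var}={row[id_var]!r} not found in the right data set")
        merged.append({**row, **right_index[row[id_var]]})
    return merged


# Hypothetical example data
master = [
    {"hhid": 101, "name": "Asha", "village": "North"},
    {"hhid": 102, "name": "Bo", "village": "South"},
]
survey = [{"name": "Bo", "village": "South", "income": 250}]
baseline = [{"hhid": 102, "consumption": 80}]

survey = attach_ids(survey, master, ["name", "village"], "hhid")
merged = merge_on_id(survey, baseline, "hhid")
print(merged)
# [{'hhid': 102, 'name': 'Bo', 'village': 'South', 'income': 250, 'consumption': 80}]
```

Both functions fail loudly rather than silently dropping or duplicating rows. A bad match on names or a duplicated ID is cheaper to fix at merge time than after it has propagated into the analysis.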

Guidelines

- Organize information on the topic into subsections. For each subsection, include a brief description or overview, with links to articles that provide details.

Organization of the Project Folder

Changes made to the data folder affect the standards that field coordinators, research assistants, and economists will work within for years to come. It cannot be stressed enough that setting up this folder well is one of the most important steps for the productivity of the project team and for reducing sources of error in the data work.

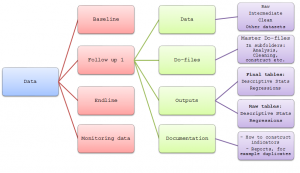

Most projects have a project folder on a synced service, for example Box or Dropbox. The project folder might contain several folders, such as government communications, budget, and concept note. In addition to those folders, you want one folder called the data folder. All files related to data work on the project should be stored in this folder. Setting up this folder for the first time is the most important stage in setting the data work standards for the project. The data folder should include master do-files that are used to run all other do-files and that also serve as a map for navigating the data work folder. Some folders have specific best practices that should be followed, and a well-structured project should also have clear naming conventions.
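As an illustration only, the sketch below creates a data folder skeleton inside a project folder with Python's `pathlib`. The data folder name (`Data`), the subfolder names, and the master do-file name (`master.do`) are hypothetical placeholders, not a prescribed standard. The point is that the structure is created once, by script, so every team member starts from the same layout.

```python
from pathlib import Path

# Hypothetical subfolder layout: rename to match the project's own conventions.
SUBFOLDERS = [
    "MasterData",
    "Baseline/Raw",
    "Baseline/Clean",
    "Endline/Raw",
    "Endline/Clean",
]


def create_data_folder(project_folder):
    """Create the data folder skeleton in the project folder (created if missing).

    Folders that already exist are left as they are, and the master do-file
    is only written if it is missing, so the function is safe to re-run.
    """
    data_folder = Path(project_folder) / "Data"
    for sub in SUBFOLDERS:
        (data_folder / sub).mkdir(parents=True, exist_ok=True)
    master_do = data_folder / "master.do"
    if not master_do.exists():
        # A Stata comment line as a placeholder; the real master do-file
        # runs all other do-files and doubles as a map of the folder.
        master_do.write_text("* Master do-file: runs all other do-files in order\n")
    return data_folder
```

A call such as `create_data_folder("path/to/synced/ProjectFolder")` (hypothetical path) would then produce the same skeleton on any team member's machine.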

Master data sets

As the project folder grows bigger and more complex, we need tools to make sure there are no discrepancies in how we identify observations in the first year of a project relative to the last year. We also need an efficient overview of the observations in the project and of the data we have on them, so we keep one data file that stores exactly this information. This type of data set is called a master data set, and a typical impact evaluation requires a few of them.

We need one master data set for each unit of observation that is relevant to the analysis, for example the survey respondent or the level of randomization (see the master data set article for more details). Common examples are a household master data set, a village master data set, and a clinic master data set.

These master data sets should contain time-invariant information, for example ID variables and dummy variables indicating inclusion in different data sets. We want this information for all observations we encountered, even those we did not select during sampling. This is a small but important point; read the master data set article for more details.
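A minimal sketch of building a master data set as described above, again using Python lists of dictionaries rather than Stata. It assumes a hypothetical household listing that covers every household encountered, including those not sampled. It also assumes the IDs contained in each data set are already known. The names `build_master`, `hhid`, `village`, and the `in_<data set>` dummy naming are illustrative only.

```python
def build_master(listing, data_sets, id_var):
    """Build a master data set for one unit of observation.

    listing:   one row per observation ever encountered, including those not
               selected during sampling, with time-invariant variables only.
    data_sets: mapping from a data set name to the IDs that appear in it.
    Returns a copy of the listing with one 0/1 dummy per data set.
    """
    ids = [row[id_var] for row in listing]
    if len(ids) != len(set(ids)):
        raise ValueError(f"{id_var} is not unique in the listing")

    id_sets = {name: set(ids_in) for name, ids_in in data_sets.items()}
    # Every observation in any data set must already exist in the master
    for name, ids_in in id_sets.items():
        unknown = ids_in - set(ids)
        if unknown:
            raise ValueError(f"{name} has IDs missing from the master: {sorted(unknown)}")

    master = []
    for row in listing:
        new_row = dict(row)
        for name, ids_in in id_sets.items():
            new_row[f"in_{name}"] = int(row[id_var] in ids_in)
        master.append(new_row)
    return master


# Hypothetical household listing: household 103 was listed but not sampled
listing = [
    {"hhid": 101, "village": "North"},
    {"hhid": 102, "village": "South"},
    {"hhid": 103, "village": "South"},
]
data_sets = {"sample": [101, 102], "baseline": [101, 102], "endline": [101]}
for row in build_master(listing, data_sets, "hhid"):
    print(row)
```

The check that every ID in a data set already exists in the listing reflects the master data set's role as the single reference for which observations exist. A new ID should be added to the master first, not discovered in a survey data set.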

ID Variables

All observations in all data sets should always be identified by the value of a variable that has the properties of an ID variable. The main properties of an ID variable are that it should be uniquely and fully identifying, constant across the project, constant throughout the duration of the project, and unique to our project. The last one is only relevant if
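The first two properties can be checked mechanically in any single data set. The sketch below is a hypothetical Python helper, not part of any library, and is roughly analogous in spirit to Stata's `isid` command. It treats `None` or an empty string as a missing ID value, which is an assumption about how missing values are coded. It accepts a single ID variable or a combination of variables.

```python
def check_id(rows, id_vars):
    """Check that id_vars fully and uniquely identify the rows of a data set.

    Fully identifying:    no row has a missing (None or "") ID value.
    Uniquely identifying: no two rows share the same ID value(s).
    id_vars is a single variable name or a list of names, for example a
    household ID plus a time variable in a panel data set.
    """
    if isinstance(id_vars, str):
        id_vars = [id_vars]
    seen = set()
    for i, row in enumerate(rows):
        key = tuple(row.get(v) for v in id_vars)
        if any(value is None or value == "" for value in key):
            raise ValueError(f"row {i}: missing value in {id_vars}")
        if key in seen:
            raise ValueError(f"row {i}: duplicate ID {key}")
        seen.add(key)
    return True


# Hypothetical panel: each household appears once per survey round
panel = [
    {"hhid": 101, "round": 1}, {"hhid": 101, "round": 2},
    {"hhid": 102, "round": 1}, {"hhid": 102, "round": 2},
]
check_id(panel, ["hhid", "round"])  # passes
# check_id(panel, "hhid") would raise: hhid alone repeats across rounds
```

This sketch does not check the remaining properties (constant over time, unique to the project). Those depend on how IDs are assigned and cannot be verified from one data set on its own.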

- All data sets should have a fully and uniquely identifying ID variable.

- Using two variables together as the ID is acceptable, but should be avoided in most cases. One case where multiple ID variables are good practice is a panel data set, where one ID variable is, for example, the household ID and the other is time.

- After we have created IDs in the master data sets, we can drop all identifying information that is not needed for the analysis from all other data sets to which the ID has been copied.
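A minimal sketch of the last point in the list above: once the ID is in a data set, identifying variables can be dropped from it. The ID still links each row back to the master data set. The set of identifying variable names and the `deidentify` helper are hypothetical placeholders; each project has to decide which of its variables count as identifying.

```python
# Hypothetical identifying variables; each project must list its own.
IDENTIFYING_VARS = {"name", "phone", "gps_lat", "gps_lon"}


def deidentify(rows, id_var, identifying_vars=IDENTIFYING_VARS):
    """Return a copy of a data set with identifying variables dropped.

    The ID variable is kept, so each row can still be linked to the master
    data set. Rows without an ID are rejected, because dropping identifying
    information from them would make them impossible to link.
    """
    if id_var in identifying_vars:
        raise ValueError(f"{id_var} is the ID variable and must be kept")
    for i, row in enumerate(rows):
        if row.get(id_var) in (None, ""):
            raise ValueError(f"row {i} has no {id_var}; copy the ID in first")
    return [
        {var: value for var, value in row.items() if var not in identifying_vars}
        for row in rows
    ]


survey = [{"hhid": 102, "name": "Bo", "phone": "555-0100", "income": 250}]
print(deidentify(survey, "hhid"))  # [{'hhid': 102, 'income': 250}]
```

Returning a new list rather than editing rows in place keeps the original data set untouched. That original would typically be stored separately under stricter access rules.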

Additional Resources

- list here other articles related to this topic, with a brief description and link