High Frequency Checks

Before data collection starts, the research team should work with the field team to design and code high frequency checks (HFCs) as part of the data quality assurance plan. The best time to design and code these checks is in parallel with questionnaire design and programming. Run the checks every time new data is received, so that the field team and research team get real-time information on all surveys.

Read First

- Along with back checks, high frequency checks (HFCs) are an important aspect of the data quality assurance plan.

- Before launching the survey, carry out a data-focused pilot to plan for high frequency checks.

- IPA has created a Stata package called ipacheck to carry out high frequency checks on survey data.

General Principles

While survey firms often conduct internal quality checks, the research team should also run its own set of high frequency checks to improve the quality of research outputs. For this purpose, DIME has proposed the following four general principles for conducting quality checks on various data sources:

Completeness

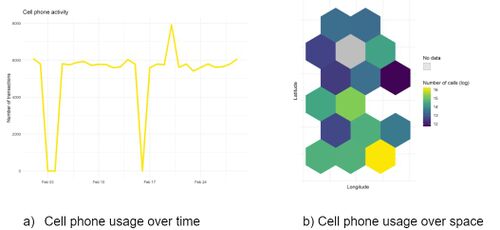

Compare collected responses with the sample to make sure all data points actually appear in the dataset. Verify that there are no duplicate observations. Check whether the data covers all timeframes (in case of time series data), all locations (such as all villages in a district), and all units of observation. One quick way to check for completeness is by visualizing the dataset to identify gaps.

For example, the accompanying figure shows the timeframes and locations where data on cell phone use is missing.
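
The comparison against the sample and the duplicate check can be sketched in a few lines. This is a minimal illustration in Python using only the standard library, not part of any existing package: the function name `completeness_report` and the household IDs are hypothetical, and a real project would read the sample list and the incoming data from its own files.

```python
from collections import Counter


def completeness_report(sample_ids, collected_ids):
    """Compare collected IDs against the sample and flag duplicates."""
    counts = Counter(collected_ids)
    sample = set(sample_ids)
    collected = set(counts)
    return {
        # Sampled units that have not appeared in the data yet
        "missing": sorted(sample - collected),
        # IDs in the data that were never part of the sample
        "unexpected": sorted(collected - sample),
        # IDs that appear more than once in the data
        "duplicates": sorted(i for i, n in counts.items() if n > 1),
    }


# Hypothetical household IDs, for illustration only
sample_ids = ["H001", "H002", "H003", "H004"]
collected_ids = ["H001", "H002", "H002", "H005"]

report = completeness_report(sample_ids, collected_ids)
print(report)
```

The same pattern extends to locations or time periods by replacing the ID with a (location, period) pair.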

Consistency

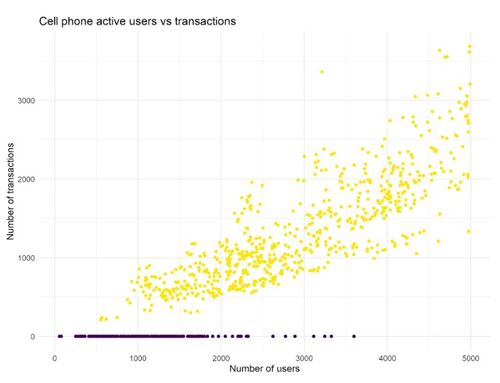

For each variable, make sure that the data is consistent across rounds of data collection. For example, a farmer who reported sowing 1 hectare of maize in a baseline survey but harvesting 20 hectares of maize in an endline survey has given inconsistent responses. Before receiving the data, use another source of data for these variables to note down expected ranges. Then, once the data is received, check that the data points for each variable fall within the expected ranges.

For example, the accompanying figure checks for consistency between the number of active users of cell phones, and the number of daily calls and messages. In this case, the points in purple are not consistent because every call or message must have a user who generates it.
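
Both checks in this section, expected ranges and agreement across rounds, can be sketched as follows. This is a hedged illustration in standard-library Python: the function names, the variable `maize_ha`, the range of 0 to 10 hectares, and the `max_ratio` threshold are all assumptions chosen to mirror the maize example, not values from any real survey.

```python
def out_of_range(records, expected_ranges):
    """Return (id, variable, value) for every value outside its expected range."""
    flags = []
    for rec in records:
        for var, (low, high) in expected_ranges.items():
            value = rec.get(var)
            if value is None:
                continue  # missing values belong to the completeness check
            if not (low <= value <= high):
                flags.append((rec["id"], var, value))
    return flags


def inconsistent_across_rounds(baseline, endline, var, max_ratio):
    """Return IDs whose endline value exceeds max_ratio times the baseline value."""
    base = {r["id"]: r[var] for r in baseline}
    return [
        r["id"]
        for r in endline
        if r["id"] in base and r[var] > max_ratio * base[r["id"]]
    ]


# Hypothetical data: farmer F01 sowed 1 hectare but reports harvesting 20
baseline = [{"id": "F01", "maize_ha": 1.0}, {"id": "F02", "maize_ha": 2.0}]
endline = [{"id": "F01", "maize_ha": 20.0}, {"id": "F02", "maize_ha": 2.0}]

range_flags = out_of_range(endline, {"maize_ha": (0, 10)})
round_flags = inconsistent_across_rounds(baseline, endline, "maize_ha", max_ratio=1.0)
print(range_flags, round_flags)
```

Here `max_ratio=1.0` encodes the assumption that the harvested area cannot exceed the sown area; other variables would need their own rule.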

Anomalous data points

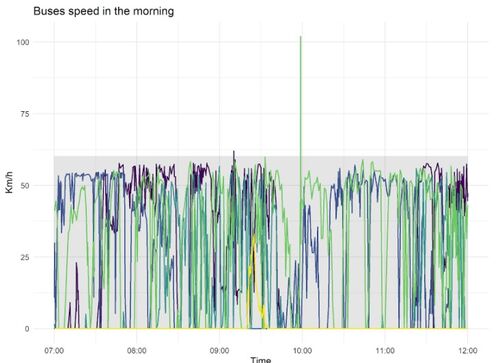

One of the most common ways to deal with anomalous data points (like outliers) is through carefully programmed questionnaires that set the accepted range and/or type of values for each question. However, there can still be outliers because of random issues during the survey. Use standard deviation as a benchmark - for instance, by flagging observations that are more than 2 standard deviations higher or lower than the mean. Regularly back check incoming data to also identify outliers. Visualizing the data points in this case can again help with identifying outliers.

For example, the accompanying figure shows the speeds of 4 vehicles over time. Any data point that lies outside the gray area in this figure can be easily identified as an anomalous data point, since it is more than 2 standard deviations away from the mean.
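
The standard deviation benchmark can be sketched as below. This is a minimal standard-library Python example: the function name `flag_outliers` and the speed values are hypothetical, and the threshold of 2 standard deviations is exposed as a parameter because the appropriate cutoff depends on the variable.

```python
from statistics import mean, stdev


def flag_outliers(values, n_sd=2.0):
    """Return the positions of values more than n_sd sample SDs from the mean."""
    if len(values) < 2:
        return []  # a standard deviation needs at least two observations
    center = mean(values)
    spread = stdev(values)
    if spread == 0:
        return []  # all values identical, nothing to flag
    return [i for i, v in enumerate(values) if abs(v - center) > n_sd * spread]


# Hypothetical vehicle speeds; the last reading is implausibly high
speeds = [50, 52, 49, 51, 50, 48, 53, 50, 120]

flagged = flag_outliers(speeds)
print([(i, speeds[i]) for i in flagged])
```

Because an extreme value inflates both the mean and the standard deviation, this rule can miss outliers in very small samples, so it works best alongside the range constraints programmed into the questionnaire.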

Real-time

Real-time checks on quality can be done by discussing any issues that come up with the survey firm just a few hours after receiving the data from the field. However, it might not always be possible to do this, especially when using secondary data. In this case, run checks for completeness, consistency, and anomalous data points as soon as the data is received. Discuss the data points that are flagged in these checks in real-time to improve data quality.

Types

The following are the different categories of high frequency checks that research teams can use to improve data quality:

- Response quality checks: These checks are designed to ensure that responses are consistent for a particular survey instrument, and that the responses fall within a particular range. For example, check that all variables are standardized and that there are no outliers in the data. Share a daily log of such errors, and check whether the errors are concentrated among particular enumerators, in which case there might be a need to re-train them.

- Enumerator checks: These are designed to check if data shared by any particular enumerator is significantly different from the data shared by other enumerators. Some parameters to check enumerator performance include percentage of "Don't know" responses, or average interview duration. In the first case, there might be a need to re-draft the questions, while in the second case, there might be a need to re-train enumerators.

- Programming checks: These test for issues in logic or skip patterns that were not spotted during questionnaire programming. These also include checking for data that is missing because the programmed version of the instrument skips certain questions. In this case, program the instrument again, and double-check for errors.

- Duplicates and survey log checks: These ensure that all the data collected from the field is on the server, and that there are no duplicates. In case some data is missing, or the survey forms are incomplete, share a report with the field team and identify the reasons for low completion rate. In case there are duplicate IDs, identify and resolve them.

- Other checks related to the project: These include checking whether the start date and end date of an interview are the same, or ensuring that at least one variable uniquely identifies each observation. Further, in the case of administrative data, there can be daily checks that compare the data with previous records, and that ensure participation in a study was only offered to those who were selected for treatment. For example, in an intervention involving cash transfers, check the details of the accounts to ensure that each account is in the name of a person who was selected for treatment.
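
As one illustration of the categories above, the enumerator checks can be sketched by summarizing each enumerator's share of "Don't know" responses and average interview duration. This is a hedged standard-library Python example: the field names, the code `-99` for "Don't know", and the data are all hypothetical.

```python
from collections import defaultdict
from statistics import mean

DONT_KNOW = -99  # hypothetical code for "Don't know"; use the survey's own code


def enumerator_summary(interviews, dont_know=DONT_KNOW):
    """Summarize "Don't know" share and average duration for each enumerator."""
    by_enum = defaultdict(list)
    for row in interviews:
        by_enum[row["enumerator"]].append(row)

    summary = {}
    for enum, rows in by_enum.items():
        answers = [a for r in rows for a in r["answers"]]
        n_dk = sum(a == dont_know for a in answers)
        summary[enum] = {
            "interviews": len(rows),
            "pct_dont_know": 100 * n_dk / len(answers) if answers else 0.0,
            "avg_duration_min": mean(r["duration_min"] for r in rows),
        }
    return summary


# Hypothetical interviews, for illustration only
interviews = [
    {"enumerator": "E1", "duration_min": 40, "answers": [1, 2, 3, 4]},
    {"enumerator": "E1", "duration_min": 50, "answers": [2, -99, 1, 3]},
    {"enumerator": "E2", "duration_min": 10, "answers": [-99, -99, 1, -99]},
    {"enumerator": "E2", "duration_min": 14, "answers": [-99, 2, -99, -99]},
]

summary = enumerator_summary(interviews)
for enum, stats in sorted(summary.items()):
    print(enum, stats)
```

In this made-up data, enumerator E2 stands out on both measures, which is the kind of pattern that would prompt a conversation with the field team.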

Useful Tips

Keep the following in mind regarding high frequency checks (HFCs):

- Prepare HFCs before the start of the survey. Prepare the code and instructions for HFCs before starting field data collection.

- Regularly monitor and resolve errors. Monitor the reporting of errors, and deal with them daily.

- HFCs should be a one-click process. IPA has created ipacheck, a Stata package for running HFCs on research data.

- Prioritize. It might not always be possible to perform all checks. So prioritize the ones that are most likely to affect data quality.
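
One way to get close to a one-click process is to register every check in one place and run them all with a single call. The sketch below is a standard-library Python illustration of that idea only. It does not reproduce the interface of ipacheck, which is a Stata package, and the check functions, field names, and the 15-minute threshold are hypothetical.

```python
def duplicate_ids(rows):
    """Return IDs that appear more than once, in order of repeat appearance."""
    seen, dups = set(), []
    for r in rows:
        if r["id"] in seen:
            dups.append(r["id"])
        seen.add(r["id"])
    return dups


def short_interviews(rows, min_minutes=15):
    """Return IDs of interviews shorter than min_minutes (assumed threshold)."""
    return [r["id"] for r in rows if r["duration_min"] < min_minutes]


# Registry of checks: adding a new check means adding one entry here
CHECKS = {
    "duplicate_ids": duplicate_ids,
    "short_interviews": short_interviews,
}


def run_all_checks(rows, checks=CHECKS):
    """Run every registered check on the same data and collect the flags."""
    return {name: check(rows) for name, check in checks.items()}


# Hypothetical incoming data, for illustration only
rows = [
    {"id": "H001", "duration_min": 42},
    {"id": "H002", "duration_min": 9},
    {"id": "H002", "duration_min": 38},
]

report = run_all_checks(rows)
for name, flagged in report.items():
    print(f"{name}: {len(flagged)} flagged {flagged}")
```

Keeping the checks in a registry also supports prioritizing: the most important checks can be listed first or run as a smaller subset when time is short.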

Additional Resources

- DIME Analytics (World Bank), Data Quality Checks

- DIME Analytics (World Bank), Assuring Data Quality

- Innovations for Poverty Action, Template for high frequency checks

- Innovations for Poverty Action, High frequency checks and ipacheck

- J-PAL, Data Quality Checks

- SurveyCTO, Data quality with SurveyCTO

- SurveyCTO, Monitoring and Visualization

- SurveyCTO, World Bank Researchers Teach How to Create High Frequency Checks Dashboards